Distribution Divergences


KL divergence

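KL divergence is an asymmetric measure of how far apart two distributions are: in general, $\mathrm{KL}(p \| q) \neq \mathrm{KL}(q \| p)$.

- Reduces to a log-likelihood objective in certain situations: when the first argument is a fixed data distribution, minimizing $\mathrm{KL}(p_{\text{data}} \| p_\theta)$ over $\theta$ is equivalent to maximizing the expected log-likelihood $\mathbb{E}_{p_{\text{data}}}[\log p_\theta(x)]$, because the entropy of $p_{\text{data}}$ does not depend on $\theta$

- The two orderings, $\mathrm{KL}(p \| q)$ and $\mathrm{KL}(q \| p)$, give two very different objectives when optimized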
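The asymmetry is easy to see numerically. The following is a minimal sketch for discrete distributions, assuming $q(x) > 0$ wherever $p(x) > 0$; the function name `kl_divergence` and the example vectors are illustrative, not taken from these notes.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions given as lists of probabilities.

    Assumes q[i] > 0 wherever p[i] > 0. Terms with p[i] == 0 contribute 0.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Two hypothetical distributions over three outcomes.
p = [0.8, 0.1, 0.1]
q = [0.4, 0.3, 0.3]

forward = kl_divergence(p, q)  # KL(p || q)
reverse = kl_divergence(q, p)  # KL(q || p)

print(f"KL(p || q) = {forward:.4f}")
print(f"KL(q || p) = {reverse:.4f}")
```

The two printed values differ, which is why the choice of ordering matters when KL is used as a training objective.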

F-divergences

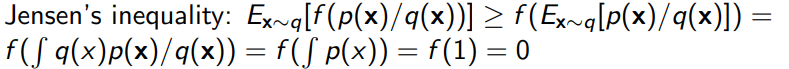

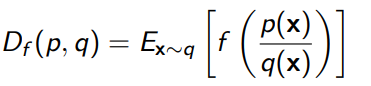

Given two densities $p$ and $q$, we define a general f-divergence as

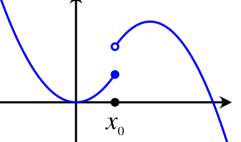

where $f$ is any convex, lower-semicontinuous function with $f(1) = 0$. Lower-semicontinuous basically means that around a point $x_0$, the function cannot suddenly jump to values below $f(x_0)$; jumps to values above $f(x_0)$ are fine. Formally, $\liminf_{x \to x_0} f(x) \ge f(x_0)$. This looks like the following diagram:

Properties of F-divergences

Always non-negative: $D_f(p \| q) \ge 0$, with equality when $p = q$

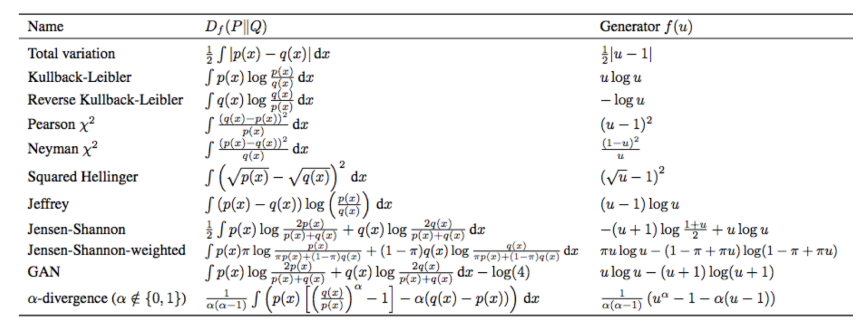
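Non-negativity can be sanity-checked numerically. This is a minimal sketch, assuming discrete distributions with strictly positive entries and the common convention $D_f(p \| q) = \sum_x q(x)\, f\big(p(x)/q(x)\big)$; the helper names and the two generators shown are illustrative choices, not taken from these notes.

```python
import math

def f_divergence(p, q, f):
    """D_f(p || q) = sum_x q(x) * f(p(x) / q(x)).

    Assumes q[i] > 0 for every i (hypothetical helper for illustration).
    """
    return sum(qi * f(pi / qi) for pi, qi in zip(p, q))

def f_kl(u):
    # Generator u * log(u); convex with f(1) = 0. Uses the limit 0 * log(0) = 0.
    return u * math.log(u) if u > 0 else 0.0

def f_reverse_kl(u):
    # Generator -log(u); convex with f(1) = 0. Requires u > 0.
    return -math.log(u)

p = [0.8, 0.1, 0.1]
q = [0.4, 0.3, 0.3]

d_kl = f_divergence(p, q, f_kl)           # equals sum_x p log(p / q)
d_rev = f_divergence(p, q, f_reverse_kl)  # equals sum_x q log(q / p)
d_same = f_divergence(p, p, f_kl)         # identical distributions

print(d_kl, d_rev, d_same)
```

Both divergences come out non-negative, and the divergence of a distribution from itself is zero because every ratio is 1 and $f(1) = 0$.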

Examples of F-divergences

There are many types of f-divergences, with a common one being KL divergence, where $f(u) = u \log u$ (careful! It's not $\log u$, because the integrand is $q \, f(p/q)$, not $p \, f(p/q)$). Total Variation is also a common one, where the